
AI & Accounting Research

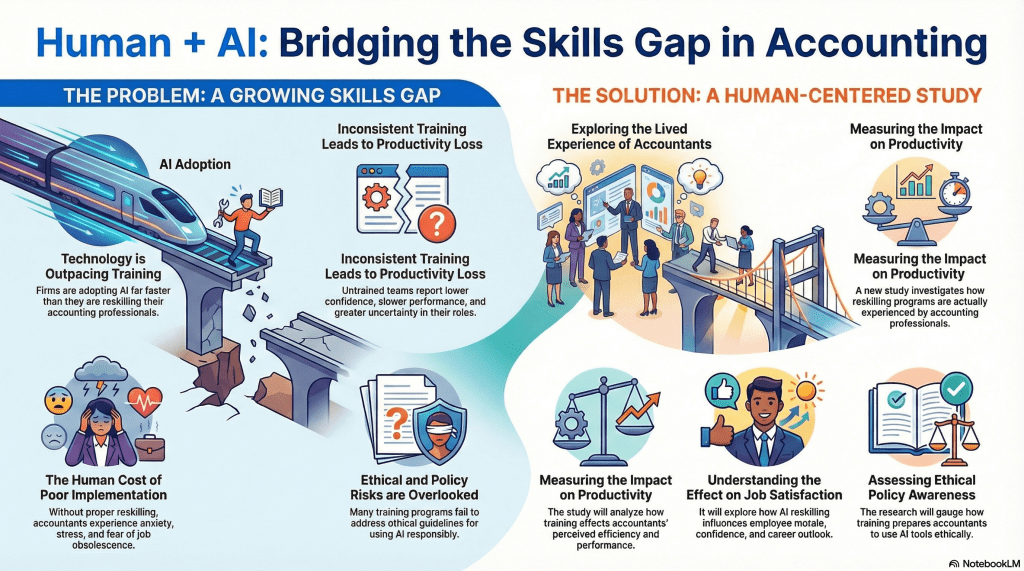

Human + AI: Bridging the Skills Gap in Accounting

Technology is moving faster than training. Here’s what the growing AI skills gap means for accounting professionals — and the human-centered solution that can close it.

Dr. Ashour · April 9, 2026 · 10 min read

- 3× faster AI adoption vs. reskilling pace

- ↓ Confidence & performance in untrained teams

- 4 key dimensions measured in new study

- ∞ Ethical risk when AI policy training is absent

There is a quiet crisis unfolding inside accounting firms across the country. Artificial intelligence tools — built to automate reconciliations, flag compliance issues, accelerate data extraction, and predict audit risk — are being deployed at a pace that no training program has matched. The result is not a technology failure. It is a human failure: skilled accounting professionals left to navigate powerful new systems without the preparation, confidence, or ethical frameworks they need.

This is the central finding — and the central concern — of a new human-centered study examining how AI adoption is actually being experienced by accountants on the ground. Not how it looks on paper. Not what vendor demos promise. But what it feels like to be a trained financial professional whose job is changing faster than anyone is helping them adapt.

“Technology is outpacing training — and the human cost of that gap is being paid in anxiety, lost productivity, and ethical blind spots.”

The Problem: A Growing Skills Gap

Let’s be direct about what “skills gap” means in this context. It is not simply that accountants lack familiarity with new software. The gap is structural, psychological, and ethical — and it is widening with every quarter that AI capabilities advance faster than professional development programs catch up.

Technology Is Outpacing Training

Accounting firms — from national Big Four players to regional practices — are integrating AI into their workflows at an accelerating rate. Generative AI tools assist with client communication. Machine learning models scan general ledgers for anomalies. Robotic process automation handles repetitive data entry that once consumed entire teams. These are genuinely transformative capabilities.

But the investment in deploying these tools has not been matched by investment in preparing the people who must use them. Firms prioritize the license agreements over the learning curves. The result: accounting professionals are handed access to sophisticated systems and expected to figure it out — while still meeting client deadlines, billing their hours, and maintaining the professional standards the public depends on them to uphold.

Inconsistent Training Leads to Productivity Loss

When training does exist, it is inconsistent. Some team members receive structured onboarding; others receive nothing but a login credential. Some offices within the same firm have AI champions who guide adoption; others have none. This patchwork approach does not merely create knowledge gaps — it actively degrades team performance.

Untrained accounting professionals report measurably lower confidence in their outputs, slower performance on tasks the AI is supposed to accelerate, and greater uncertainty about their role and value in an increasingly automated environment. Paradoxically, the tools meant to make them more productive are — without proper training — making them less so.

⚡ Technology Outpacing Training

Firms are deploying AI tools far faster than they are building the reskilling programs to support the professionals who must use them daily.

📉 Productivity Paradox

Untrained teams report lower confidence, slower performance, and increased role uncertainty: the opposite of what AI adoption is supposed to deliver.

😟 The Human Cost

Without proper reskilling, accountants experience anxiety, chronic work stress, and genuine fear of obsolescence, damaging morale and retention.

⚖️ Ethical & Policy Blind Spots

Most AI training programs skip ethical guidelines entirely, leaving professionals unprepared to use AI responsibly or spot when it shouldn’t be trusted.

The Human Cost of Poor Implementation

Perhaps the most under-discussed consequence of the AI skills gap is its toll on the people inside the profession. Accountants who have spent careers building expertise in tax code, audit methodology, and financial reporting now find that expertise feels suddenly contingent. If a machine can do what they do — and they are not sure yet whether it can, or how well — what exactly is their professional identity?

This is not an abstract philosophical question. It manifests as workplace anxiety, elevated stress levels, difficulty concentrating, and a creeping fear of job obsolescence that affects not just performance but career commitment. The profession risks losing experienced professionals — not to AI replacement, but to burnout caused by the uncertainty that poor AI implementation creates.

Ethical and Policy Risks Are Being Overlooked

There is a third dimension of the skills gap that receives almost no attention: ethics. Accounting is a profession built on standards. GAAP. GAAS. Independence requirements. Fiduciary duty. The entire edifice of public trust in financial reporting rests on accountants’ commitment to those standards.

AI introduces new ethical terrain that most training programs simply do not address. When an AI flags an anomaly, who is responsible for the decision that follows? When a model trained on historical data makes a recommendation that discriminates — however inadvertently — across client demographics, what is the accountant’s obligation? When AI-generated analysis contains a confident-sounding error, how does a professional who was never trained to critically evaluate model outputs catch it?

These are not hypothetical risks. They are happening in firms today. And they are happening in a training vacuum.

The Research

The Solution: A Human-Centered Study

Recognizing that the AI skills gap cannot be solved by more technology, a new research initiative is taking a fundamentally different approach: it is asking the accountants themselves what is happening to them — and measuring the actual outcomes of structured versus unstructured reskilling.

This human-centered study is built around four core investigative dimensions, each designed to surface insights that technology-first analyses routinely miss.

🧭 Lived Experience

Qualitative deep-dives into how accounting professionals actually experience AI adoption, capturing what surveys miss.

📊 Productivity Impact

Measuring whether reskilling programs genuinely improve perceived efficiency and task performance across accounting functions.

👍 Job Satisfaction & Morale

Exploring how AI reskilling influences employee confidence, career outlook, and retention, not just output metrics.

📘 Ethical Policy Awareness

Gauging whether training prepares professionals to use AI tools responsibly, critically, and in alignment with professional standards.
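To make the structured-versus-unstructured comparison concrete, here is a minimal sketch of how responses along these dimensions might be recorded and summarized. Everything in it is an assumption made for illustration: the `Response` fields, the 1 to 5 rating scale, and the sample rows are hypothetical and do not come from the study's instrument or its data. Lived experience is kept as free text because that dimension is qualitative.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical survey record. Field names and the 1-5 scale are
# illustrative assumptions, not the study's actual instrument.
@dataclass
class Response:
    training: str          # "structured" or "unstructured"
    productivity: int      # perceived efficiency, 1-5
    satisfaction: int      # job satisfaction and morale, 1-5
    ethics_awareness: int  # ethical policy awareness, 1-5
    lived_experience: str  # free-text note (qualitative dimension)

SCORED = ("productivity", "satisfaction", "ethics_awareness")

def mean_by_group(responses):
    """Return {training group: {dimension: mean score}}."""
    groups = {}
    for r in responses:
        groups.setdefault(r.training, []).append(r)
    return {
        group: {dim: mean(getattr(r, dim) for r in rows) for dim in SCORED}
        for group, rows in groups.items()
    }

# Made-up rows for demonstration only; these are not study results.
sample = [
    Response("structured", 4, 5, 4, "Felt supported during rollout."),
    Response("structured", 4, 4, 3, "Knew who to ask."),
    Response("unstructured", 3, 2, 1, "Got a login and nothing else."),
    Response("unstructured", 2, 3, 2, "Unsure when to trust the output."),
]

summary = mean_by_group(sample)
for group, scores in summary.items():
    print(group, scores)
```

A real analysis would need far more than group means (sample design, validated scales, significance testing), but the shape of the comparison is the same: identical dimensions, measured for both kinds of reskilling.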

Exploring the Lived Experience of Accountants

The study begins where all good research should begin: with people. Rather than measuring AI adoption through firm-level metrics — number of tools deployed, percentage of tasks automated, ROI per workflow — the research centers the subjective experience of accounting professionals navigating this transition.

This means asking different questions. Not “Is your firm using AI?” but “How does it feel to use AI when you were trained in a world without it?” Not “Has your productivity improved?” but “Do you feel more confident in your work, or less?” These questions yield insight that no dashboard can capture, and they are precisely the insights that translate into better training design.

Measuring the Real Impact on Productivity

The study also takes a rigorous empirical approach to productivity — but it defines productivity in human terms. The question is not simply whether tasks are completed faster. It is whether accounting professionals perceive themselves as more effective, whether they trust the outputs they are responsible for, and whether their cognitive load has increased or decreased as AI tools have entered their workflow.

This distinction matters enormously. A professional who completes a reconciliation 40% faster but feels uncertain about whether the AI-assisted result is accurate has not experienced a productivity gain — they have experienced a confidence deficit. The study is designed to surface and measure exactly these kinds of nuances.
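The distinction between speed and confidence can be sketched in a few lines. This is an illustration under stated assumptions, not the study's method: the `TaskReport` fields, the 1 to 5 trust rating, and the `trust_floor` threshold are all hypothetical choices.

```python
from dataclasses import dataclass

# Hypothetical per-task report. The fields and the 1-5 trust scale
# are assumptions made for this example.
@dataclass
class TaskReport:
    baseline_minutes: float  # time for the task without AI
    ai_minutes: float        # time for the task with AI assistance
    trust: int               # self-reported trust in the result, 1-5

def speedup(report: TaskReport) -> float:
    """Fraction of time saved relative to the baseline."""
    return (report.baseline_minutes - report.ai_minutes) / report.baseline_minutes

def has_confidence_deficit(report: TaskReport, trust_floor: int = 3) -> bool:
    """True when the task got faster but trust is below the assumed floor."""
    return speedup(report) > 0 and report.trust < trust_floor

# A reconciliation finished 40% faster, but with low self-reported trust.
fast_but_unsure = TaskReport(baseline_minutes=100, ai_minutes=60, trust=2)
fast_and_sure = TaskReport(baseline_minutes=100, ai_minutes=60, trust=4)

print(speedup(fast_but_unsure))
print(has_confidence_deficit(fast_but_unsure))
print(has_confidence_deficit(fast_and_sure))
```

Both reports show the same time saving. Only the trust rating separates a genuine productivity gain from a confidence deficit, which is why a speed metric alone would miss it.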

What Good Reskilling Actually Looks Like

- Structured, consistent training rollout — not ad hoc tool access — across all team levels

- Explicit focus on when not to trust AI output, not just how to use it

- Ethics modules embedded into every AI training program, not offered as an afterthought

- Leadership modeling of AI uncertainty — normalizing “I don’t know if the model is right”

- Psychological safety to ask questions, flag concerns, and slow down when uncertain

- Regular reassessment of training as AI tools themselves evolve

Understanding the Effect on Job Satisfaction

One of the most critical — and most overlooked — outcomes of AI reskilling is its effect on how accountants feel about their jobs. The profession already faces retention challenges. Adding the anxiety of an AI transition without the support of structured training is a retention crisis waiting to happen.

The research is designed to measure whether quality reskilling programs improve not just professional competence, but professional identity. Do trained accountants feel that AI makes them more valuable — extending their expertise into new domains? Or do they feel replaced, marginalized, or uncertain? The answer to that question will shape the future of the profession more than any particular AI tool.

Assessing Ethical Policy Awareness

Finally — and this is perhaps the study’s most distinctive contribution — the research will assess whether and how reskilling programs build genuine ethical AI literacy. Not compliance checkbox literacy. Not a list of rules to memorize. But the deep, practical understanding of AI limitations, biases, and failure modes that allows an accounting professional to exercise genuine professional judgment when working alongside AI systems.

In an era when regulators are increasingly scrutinizing AI use in auditing and tax, this is not an academic concern. It is a professional liability question. Firms that train their professionals in ethical AI use are managing risk. Firms that do not are creating it.

Implications

What This Means for Accounting Firms — and the Profession

The implications of this research extend far beyond academic interest. For firm leaders, the findings will offer concrete, evidence-based guidance on how to design reskilling programs that actually work — not just programs that look good on a training calendar.

For accounting professionals themselves, the research validates what many already intuitively know: that the anxiety, confusion, and uncertainty they feel in the face of AI is not a personal failing. It is a systemic failure of implementation. And like all systemic failures, it has a systemic solution.

For policymakers and professional standards bodies — the AICPA, state boards of accountancy, the PCAOB — the study offers an evidence base for developing AI-specific professional competency standards. The accounting profession has always evolved alongside new tools and new demands. The challenge of AI is not fundamentally different. What is different is the pace — and the stakes if the profession fails to respond with the urgency the moment requires.

“The accounting professionals who will thrive in an AI-augmented world are not the ones who resist the tools — nor the ones who accept them uncritically. They are the ones who are properly trained to work alongside them.”

Bridging the Gap: The Path Forward

The bridge metaphor at the heart of this research is not accidental. Bridges are built deliberately, engineered precisely, and maintained continuously. They do not appear simply because traffic demands them. The same is true of the bridge between human expertise and artificial intelligence in accounting.

Building that bridge requires investment — in training infrastructure, in time, in the psychological safety that allows professionals to admit uncertainty and ask for help. It requires leadership commitment that goes beyond deploying the tools and assumes responsibility for the people who use them. And it requires the kind of honest, rigorous, human-centered research that this study is designed to deliver.

The skills gap in accounting is real. Its human costs are real. But so is the opportunity: to build a profession that is stronger, more capable, more ethically grounded, and more resilient precisely because it chose to bring its people along on the journey — not leave them behind.

Tags: AI in Accounting, Skills Gap, Reskilling, Accounting Technology, CPA Training, AI Ethics, Tax Technology, Productivity, Workforce Development, Future of Accounting

Is your firm ready for the AI transition?

Dr. Ashour works with accounting firms and tax professionals to design evidence-based AI reskilling programs that are practical, ethical, and built for real teams.

Author & Researcher

Dr. Ashour

Dr. Ashour is a researcher and consultant specializing in the intersection of artificial intelligence, professional workforce development, and accounting practice. His work focuses on the human dimensions of technology adoption: studying not just what AI can do, but what it does to the people who must work alongside it.

© 2026 Dr. Ashour · All Rights Reserved · drashour.com
